Rank Error in Your Favor

Update: Since writing this post, I repeatedly tried to delete the offending review from my profile, but Google Scholar kept re-inserting it as part of its automated trawl through its corpus of articles. The robots were determined to grant me these citations whether I wanted them or not. Finally, in January of 2018, John Fox got the citations he deserved and the error was fixed. True to form, the correction appeared out of the blue and its rationale was completely opaque.

Google Scholar is one of the most visible and widely used examples of the rise of “impact measurement” in academia. While it is not yet used to assess people’s research as a matter of routine, I think it’s fair to say that people keep an eye on their scores and might draw on them if it seemed advantageous. Metrics and rankings have a ratchet effect. They encourage you to play along when you score well, while leaving room to deny that a serious person such as yourself would ever take such thin measures of quality seriously. Then they trap you later on when, for example, the Dean announces that no-one with an h-index of less than ten is getting tenure.

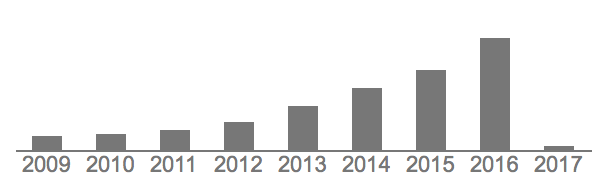

Up, up, up, up, up.

Google Scholar is also a characteristically Google-like product. Its reach is very wide, it is free and easy to use, and no-one has any detailed idea how it works. Once in a while you get an email saying a paper or two has cited your work, which is gratifying; and you can also have it send you suggested new papers to read, which is helpful. This morning I got an email letting me know that my work had been cited in the new issue of the American Journal of Botany (“Five species, many genotypes, broad phenotypic diversity: When agronomy meets functional ecology”), with another cite in a paper called “An Empirical Study of Code Smells in JavaScript Projects”, one in AOB Plants called “Non-native populations of an invasive tree outperform their native conspecifics” (who could have thought otherwise?), and yet another in a paper presented at the National Meeting of the American Society of Mining and Reclamation called “Metals in Soil and American Chestnut Tissue in Experimental Soil Treatments Plots on a Coal Mine Reclaimed Site”.

Sadly, further investigation reveals that these all appear to be errors. Entertainingly, at least some of them are attributable to what is very suddenly the most-cited paper in my Google Scholar profile:

I write a hell of a book review, let me tell you.

John Fox’s An R and S-PLUS Companion to Applied Regression is a good book, which is one of the reasons I reviewed it. But thanks to Google Scholar, we can now see that my two-page review of it has had a quite phenomenal impact over the past decade. Now, you might think Professor Fox’s book should be getting these citations, rather than my review of it. However, while reasonable, your theory cannot account for the fact that—according to some well-established, objective scholarly metrics—my book reviews are extremely widely cited. In the face of solid quantitative evidence of this sort I feel compelled to dismiss your concerns as unfounded, and indeed merely anecdotal.

A quick search—thanks again, Google!—suggests that I am not the only person to have had an overnight visit from the Citation Fairy. Some people have had publications added to their CVs, sometimes in fields they were not aware they were in, while others (sadly) seem to have suffered declines. A few lucky ducks, like me, discovered a random publication of theirs had been gifted with several thousand new citations.

By the way, one of my current projects is about the institutionalization of measurement and scoring mechanisms across social domains. Here’s a recent paper about that, co-authored with Marion Fourcade. Roughly speaking, the argument is that the tremendous engineering effort going into systems that automatically measure, score, and rank has interesting and possibly pernicious consequences in various markets and for stratification processes. It’s a good paper. But, I have to say, now that I unexpectedly have a book review with more than 2,500 citations, I think we were a bit pessimistic. You should go ahead and read the paper anyway. Or at least cite it.